Breaking news: Scaling will never get us to AGI

A new result casts serious doubt on the viability of scaling

Neural networks (at least in the configurations that have been dominant over the last three decades) have trouble generalizing beyond the multidimensional space that surrounds their training examples. That limits their ability to reason and plan reliably. It also drives their greediness with data, and even the ethical choices their developers have been making. There will never be enough data; there will always be outliers. This is why driverless cars are still just demos, and why LLMs will never be reliable.

I have said this so often in so many ways, going back to 1998, that today I am going to let someone else, Chomba Bupe, a sharp-thinking tech entrepreneur/computer vision researcher from Zambia, take a shot.

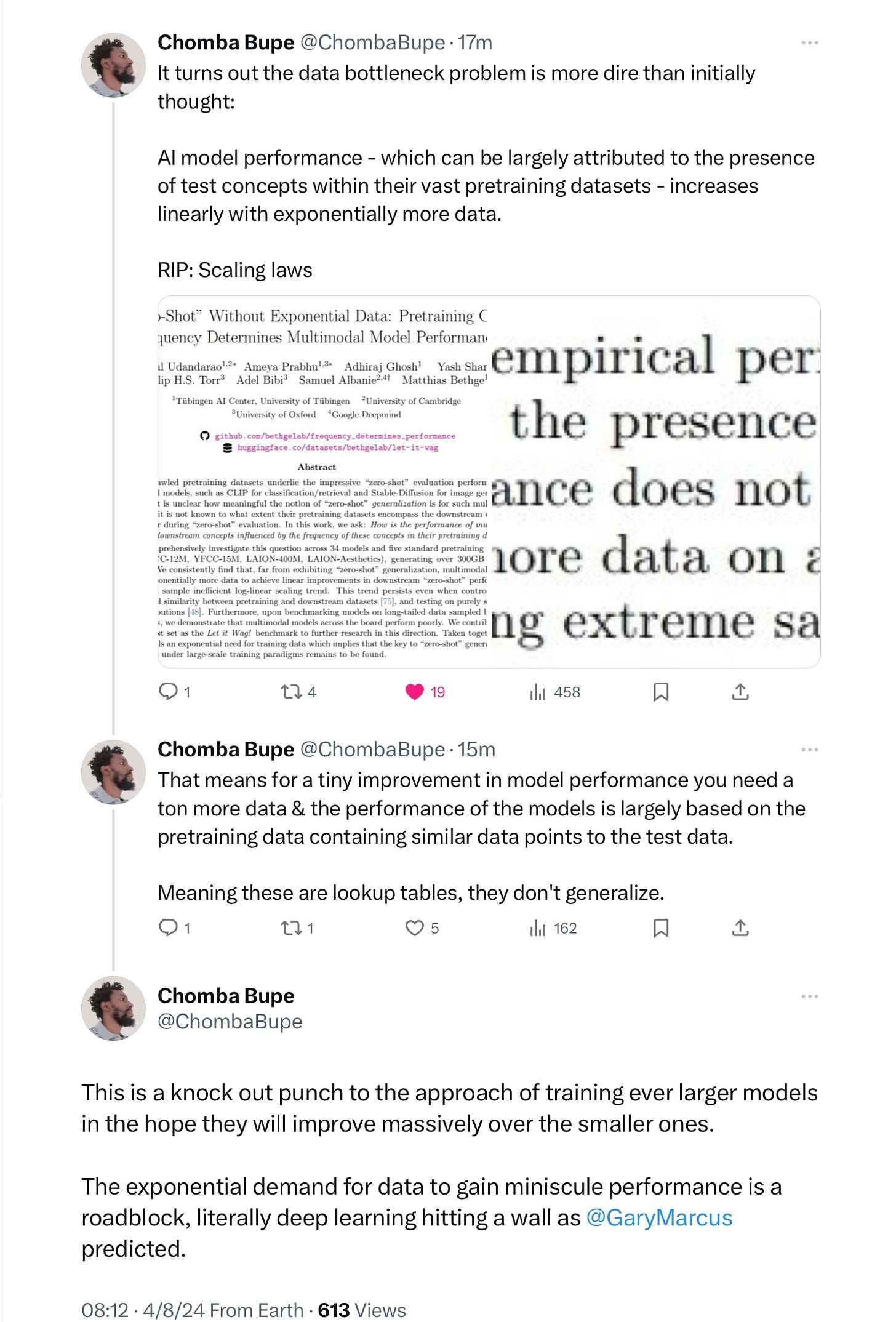

An important new preprint on data and scaling just came out. Bupe explains it well:

In short, diminishing returns are what we can expect. (Asterisk: current models aren’t literally lookup tables; they can generalize to some degree, but not enough. As I explained in the 1998 paper and in The Algebraic Mind, they can generalize within a space of training examples but face considerable trouble beyond that space.)
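To make the inside-versus-outside distinction concrete, here is a deliberately crude toy, not a neural network and not taken from the preprint or the 1998 paper. It assumes a hypothetical one-nearest-neighbor "model" that memorizes the identity function on inputs between 0 and 10. Real networks generalize better than this within their training space, but the sketch shows the shape of the failure beyond it.

```python
# Toy illustration only: a 1-nearest-neighbor "model" of the identity function.
# The names fit/predict and the training range are assumptions for this sketch.

def fit(xs, ys):
    """Memorize the training pairs."""
    return list(zip(xs, ys))

def predict(model, x):
    """Return the target attached to the closest training input."""
    nearest_x, nearest_y = min(model, key=lambda pair: abs(pair[0] - x))
    return nearest_y

# Train on f(x) = x for x = 0.0, 0.5, ..., 10.0
train_x = [i / 2 for i in range(21)]
model = fit(train_x, train_x)

inside = predict(model, 4.6)    # query within the training range
outside = predict(model, 25.0)  # query far beyond the training range

print(f"inside:  predicted {inside}, true 4.6")
print(f"outside: predicted {outside}, true 25.0")
```

Inside the training range the prediction is off by a small amount; outside it, the toy can only return the edge of what it has seen, no matter how far away the query is.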

Sooner or later, the exponential greed for data will exceed what is available.
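A back-of-the-envelope sketch shows why the appetite for data becomes exponential. It assumes, purely for illustration, that a benchmark score grows linearly in the logarithm of the number of training examples; the constants `A` and `B` below are made-up values, not figures from the preprint.

```python
import math

# Assumption (hypothetical numbers): score = A + B * log10(n_examples)
A = 10.0  # score with a single example
B = 10.0  # points gained per tenfold increase in data

def score(n_examples):
    """Score under the assumed log-linear relationship."""
    return A + B * math.log10(n_examples)

def examples_needed(target_score):
    """Invert the assumed relationship to get the data required."""
    return 10 ** ((target_score - A) / B)

for target in (50, 60, 70, 80, 90):
    print(f"score {target}: about {examples_needed(target):.0e} examples")
```

Under this assumption every additional 10 points costs ten times as much data as the previous 10, so a linear gain in performance demands an exponential increase in examples.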

To get to AGI, we need alternative approaches that can generalize better beyond the data on which they have been trained. End of story.

Gary Marcus looks forward to watching what happens as people begin to take in the implications.

In his book 'The Myth of Artificial Intelligence' (2021), Erik Larson argued that it is precisely the pursuit of Big Data(sets) that has been hindering real progress towards AGI. Interesting to see this convergent argument.

Hi Gary, are you going to write about Devin? Quite a few people have been saying it's a scam in the last few days.