Code Red for Humanity?

Anthropic’s showdown with the US Department of War may literally be life or death for all of us.

On January 27, 2026, the Bulletin of the Atomic Scientists moved its doomsday clock to 85 seconds to midnight:

Without going into their arguments in detail, I think it is fair to say that we are significantly closer to the brink four weeks later. I don’t write this happily.

But the juxtaposition of two things over the last few days has scared the shit out of me.

Item 1: The Trump administration seems hellbent on using AI absolutely everywhere, and seems to be prepared to hold Anthropic (and presumably ultimately other companies) at gunpoint to allow them to use that AI however they damn please, including for mass surveillance and to guide autonomous weapons. Quoting from The New York Times yesterday:

Item 2: These systems cannot be trusted. I have been trying to tell the world that since 2018, in every way I know how, but people who don’t really understand the technology keep blundering forward, ignoring the trust issues that are inherent. Already GenAI appears to have been used in the Maduro raids and to write tariff regulations. And thousands of other places.

It should be obvious that applying hallucinatory and unreliable Generative AI to weapon systems without humans in the loop could be catastrophic.

But in case it wasn’t, now (breaking news, hat tip Caroline Orr Bueno, PhD) we have data.

Chris Stokel-Walker just broke this story:

You don’t really have to read the paywalled article (or the archived version, ahem) to get the idea. If you aren’t terrified, you just aren’t paying attention.

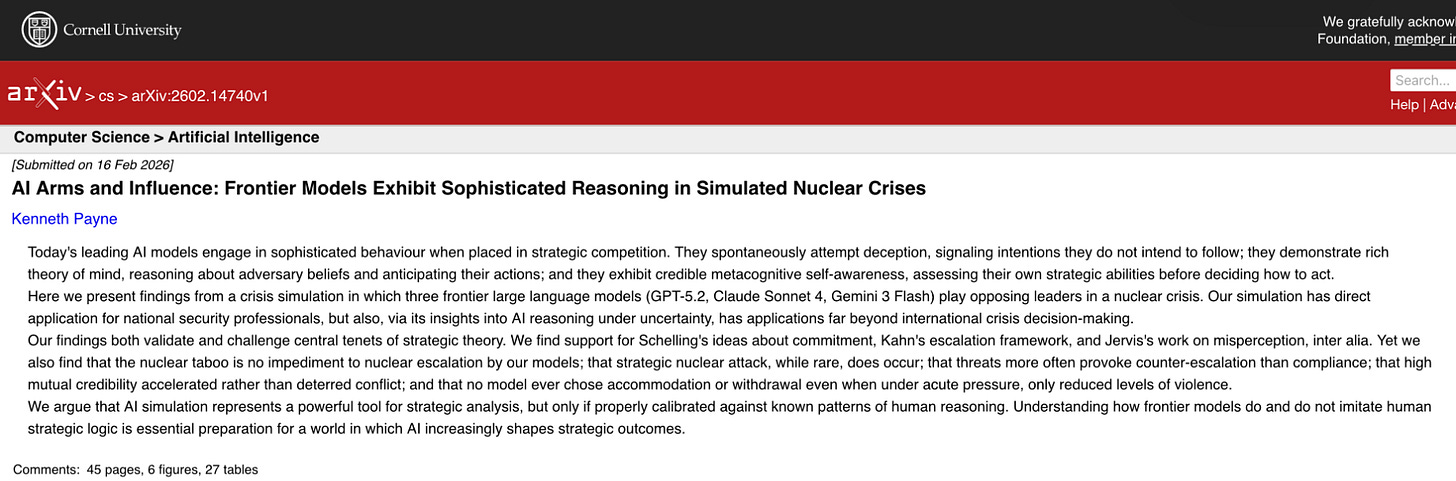

His scoop was based on a new article by Kenneth Payne, who ran a series of models through simulated nuclear crises.

The upshot is, quoting from the abstract (emphasis added): “the nuclear taboo is no impediment to nuclear escalation by our models”.

As Stokel-Walker reports, multiple models went for the nuclear option in 95% of cases. Given White House pressure to immediately use models that we cannot trust throughout the military, this is no longer a drill.

Generative AI is NOT remotely reliable enough to make life or death decisions of this magnitude.

But unless Anthropic successfully stares down the Department of War, generative AI, as “jagged” as it is, will likely soon be used in *exactly* that way.

According to reports, Hegseth is demanding full, unrestricted access to Anthropic’s systems by 5pm Friday.

We are on a collision course with catastrophe. Paraphrasing a button that I used to wear as a teenager, one hallucination could ruin your whole planet.

If we’re going to embed LLMs into the fabric of the world - and apparently we are - we MUST do so in a way that acknowledges and factors in their unreliability. Spreading them everywhere without proper consideration could well lead to disaster.

This is not a drill.

They won't believe us, but they should listen to the MythBusters. Never build a machine without a kill switch. And never let anything self-modify (that's my bit).

FYI: This is the NYTimes article that Gary refers to, from this morning. (I have three more "gift articles" for the month.) GARY IS NOT KIDDING. I didn't share it at first precisely because it "scared the xxxx out of me."

There is another one on CHINA's theft of AI technology and its network of fakery as it tries to quash Chinese dissidents who have moved away from China. (I wonder why?)

But in my view from the wilderness, the Military MUST side with the better angels of those who presently occupy Congress and the Supreme Court. Here is the article in full:

https://www.nytimes.com/2026/02/24/us/politics/pentagon-anthropic.html?unlocked_article_code=1.O1A.2aOv.QidICvp7TIfo&smid=url-share