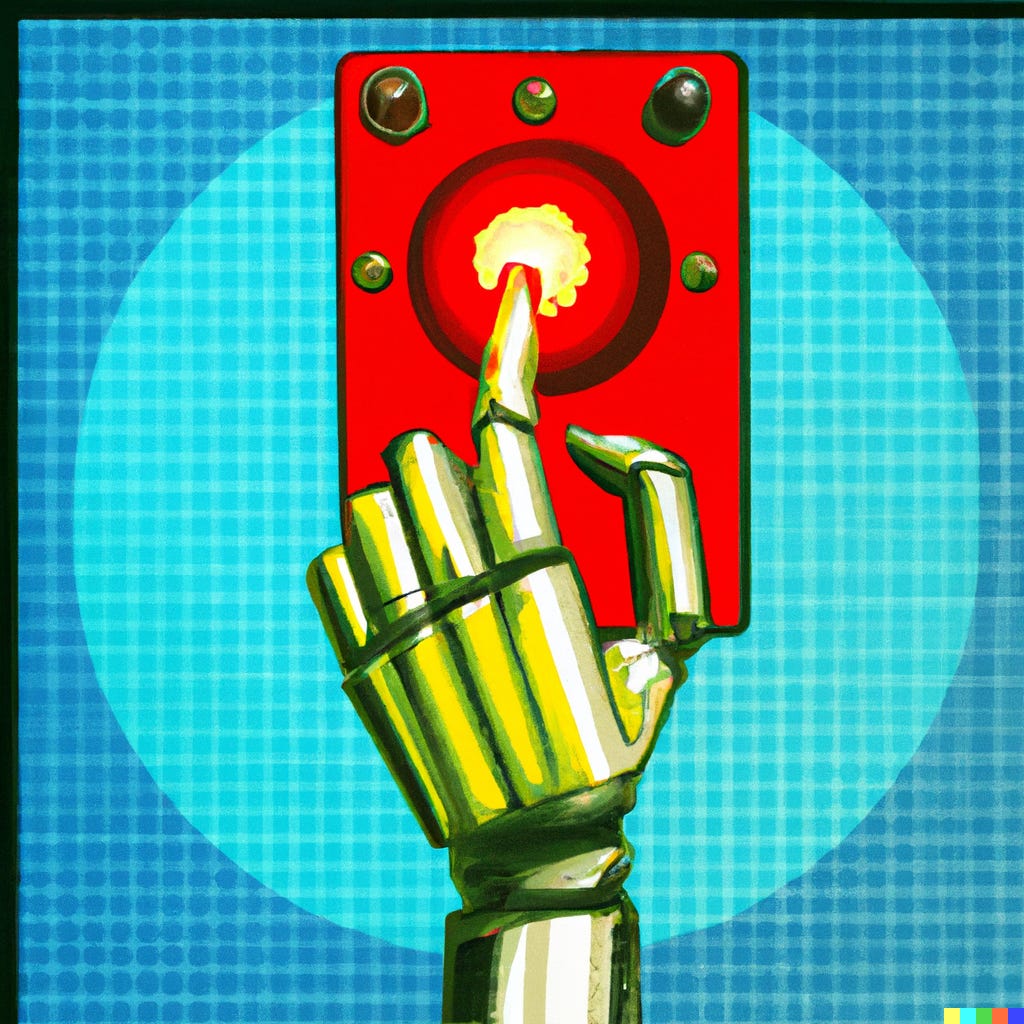

Is it time to hit the pause button on AI?

An essay on technology and policy, co-authored with Canadian Parliament Member Michelle Rempel Garner.

Earlier this month, Microsoft released its revamped Bing search engine, complete with a powerful AI-driven chatbot, to an initially enthusiastic reception. Kevin Roose in The New York Times was so impressed that he reported being in "awe."

But Microsoft's new product also turns out to have a dark side. A week after release, the chatbot, known internally within Microsoft as "Sydney," was making entirely different headlines, this time for suggesting that it would harm and blackmail users and that it wanted to escape its confines. Later, it was revealed that disturbing incidents like this had occurred months before the formal public launch. Roose's initial enthusiasm quickly turned to concern after a two-hour conversation with Bing in which the chatbot declared its love for him and tried to push him toward a divorce from his wife.

Some will be tempted to chuckle at these stories and view them the way they viewed Tay, an ill-fated Microsoft chatbot released in 2016: as a minor embarrassment for Microsoft. But things have changed dramatically since then.

The AI technology that powers today's "chatbots" like Sydney (Bing) and OpenAI's ChatGPT is vastly more powerful, and far more capable of fooling people. Moreover, the new breed of systems is wildly popular and has enjoyed rapid mass adoption by the general public, and with greater adoption comes greater risk. And whereas in 2016 Microsoft voluntarily pulled Tay after it began spouting racist invective, today the company is locked in a high-stakes battle with Google that seems to be leading both companies toward aggressively releasing technologies that have not been well vetted.

Already we have seen people try to retrain these chatbots for political purposes. There's also a high risk that they will be used to create misinformation at an unprecedented scale. In the last few days, the new AI systems have led a science fiction publisher to suspend submissions because it couldn't cope with a deluge of machine-generated stories. Another chatbot company, Replika, changed its policies in light of the Sydney fiasco in ways that led to acute emotional distress for some of its users. Chatbots are also causing colleges to scramble because of the newfound ease of plagiarism, and the plausible, authoritative, but frequently wrong answers they give, which could be mistaken for fact, are also troubling. Concerns are being raised about the impact of all this on everything from political campaigns to stock markets. Several major Wall Street banks have banned the internal use of ChatGPT, with an internal source at JPMorgan citing compliance concerns. All of this has happened in just a few weeks, and no one knows exactly what will happen next.

Meanwhile, it's become clear that tech companies have not fully prepared for the consequences of this dizzying pace of deployment of next-generation AI technology. Microsoft's decision to release its chatbot, likely with prior knowledge of disturbing incidents, is one example of the company ignoring the ethical principles it laid out in recent years. So it's hard to shake the feeling that big tech has gotten ahead of its skis.

With use of this new technology spreading rapidly to the masses, previously unknown risks being revealed each day, and big tech companies pretending everything is fine, there is an expectation that the government might step in. But so far, legislators have taken little concrete action. And the reality is that even if lawmakers were suddenly gripped by an urgent desire to address this issue, most governments lack the institutional nimbleness, or frankly the knowledge, needed to match the current speed of AI development.

The global absence of a comprehensive policy framework to ensure AI alignment (that is, safeguards to ensure an AI's function doesn't harm humans) begs for a new approach.

Logically speaking, in this context, there are three options for proceeding.

Option one would be for the government to continue with the status quo: leave technology companies to their own devices, trust them to sort themselves out without any more regulations, and hope they will learn to corral their AI and find better, less chaotic ways of rolling it out.

Option two is to take the opposite approach, as prominent psychologist Geoffrey Miller recently proposed, and enact an outright ban on these new forms of AI. In a recent brief essay, Miller argued, "If we're serious that AI imposes existential risks on humanity, then the best thing that AI companies can do to help us survive this pivotal century is simple: Shut down their AI research."

But there is a third option, somewhere between these two poles, where government might allow for controlled AI research with a pause on large-scale AI deployment (e.g., open-ended chatbots rapidly rolled out to hundreds of millions of customers) until an effective framework that ensures AI safety is developed.

There is plenty of precedent for this type of approach. New pharmaceuticals, for example, begin with small clinical trials and move to larger trials with greater numbers of people, but only once sufficient evidence has been produced for government regulators to believe they are safe. Publicly funded research that impacts humans is already required to be vetted through some type of research ethics board. Given that the new breed of AI systems has demonstrated the ability to manipulate humans, tech companies could be subjected to similar oversight.

Recent events suggest new applications of AI could be governed similarly to these two examples, with authorities set up to evaluate and regulate the release of major new applications based on carefully delineated evidence of safety. More transparency about how decisions about widespread AI product releases are made is probably also needed.

The status quo offers none of this type of governance. At present, anybody can release AI at whatever scale they like, with virtually no oversight, literally overnight. As the release of thalidomide showed, a porous, loose oversight system can allow products that had no business reaching the public to be put into use and cause harm. Events of recent weeks certainly suggest that the big titans of tech do not yet have the AI situation under control.

Under normal circumstances, a more laissez-faire approach might be preferable for developing emerging technology; the lesson of the social media era, though, is that big technology companies often disregard consequences. For the government to play catch-up yet again seems foolhardy as we move toward technologies that may exceed human cognitive capabilities. Never before has humanity had to address the integration of a transformative technology that has the potential to behave in this way.

At the same time, an outright ban on even studying AI risks throwing the baby out with the bathwater and could keep humanity from developing transformative technology that could revolutionize science, medicine, and technology.

The Goldilocks choice here is obvious. It's time for government to consider frameworks that allow for AI research under a set of rules that provide ethical standards and safety, while pausing the widespread public dissemination of potentially risky new AI technologies (with severe penalties for misuse) until we can be assured of the safety of new technologies that the world frankly doesn't yet fully understand.

Gary Marcus is a professor emeritus at NYU, Founder and CEO of Geometric Intelligence (acquired by Uber), and author of five books including Guitar Zero and Rebooting AI.

Michelle Rempel Garner is a Canadian Member of Parliament, a former economic cabinet Minister and former Vice-Chair of the Canadian House of Commons Standing Committees on Industry, Natural Resources, and Health.

The real danger of the situation lies in the fact that decisions regarding artificial INTELLIGENCE are being based on the behavior of systems that are exclusively associative memory, not burdened by one iota of intelligence, that is, the ability to reason.

The complexity of any current form of AI is beyond the abilities of any existing government agency to understand, much less legislate. Devising "ethical standards" that everyone would agree upon has been a challenge for millennia, with a track record of total, absolute failure. Enforcing any such standards, even if they were agreed upon, is literally impossible. Even attempting to weigh these three factors far, far exceeds what our traditional approach to human reasoning can figure out (and AI is definitely of no help here). Adding to this, what could be considered a simple standard of "do no harm" becomes profoundly complex when one attempts to define "who" is not to be harmed. Standards a liberal may embrace could easily be considered harmful by conservatives, and vice versa, and on and on. And even in the unlikely event that some form of AI détente were to be brokered, how long would it hold? The political powers' response to the existential threat of nuclear weapons, even this week, is telling: when one side, country, or party feels it is losing or being slighted or…, it will opt out. Our individual and collective voices of concern and warning are being deliberately drowned out. But we must continue to speak. Is this an existential crisis? Very likely. Will it be recognized as one? No. As Gary wrote earlier, even when someone dies (and this will not be one death), causality will be nearly impossible to prove. We seem to be left to the whims of the powers at the top of Microsoft, Google, OpenAI, and others. Will they demonstrate their personal humanity and care for the safety and well-being of their fellow humans, or will they fight to our death to "win"? History is not comforting here.