Retired US Air Force General Jack Shanahan on the Anthropic-Pentagon tensions

“No LLM, anywhere, in its current form, should be considered for use in a fully lethal autonomous weapon system. It's ludicrous even to suggest it.”

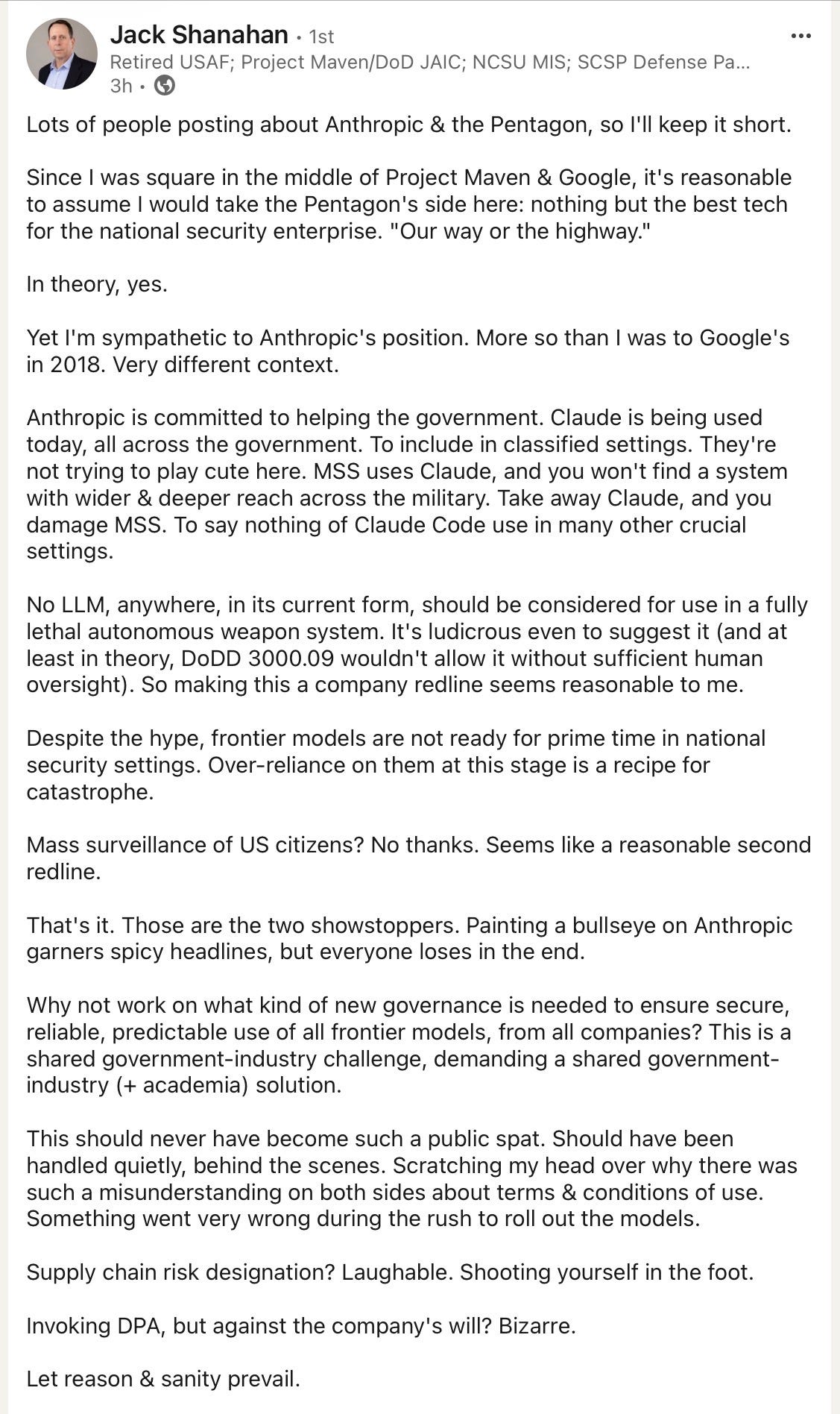

Important comments from Jack Shanahan, a retired US Air Force General who was the inaugural Director of the Department of Defense Joint Artificial Intelligence Center, reprinted from LinkedIn with his permission:

“Let reason and sanity prevail”, indeed.

“Let reason and sanity prevail” - you're asking way too much of the Trump administration there.

He also had perceptive comments on Yudkowsky & Soares' (2025) book "If Anyone Builds It, Everyone Dies":

"While I'm skeptical that the current trajectory of AI development will lead to human extinction, I acknowledge that this view may reflect a failure of imagination on my part. Regardless of where one stands on Yudkowsky and Soares's central argument, this book makes a valuable contribution by reminding us, once again, that every transformative technology in history has carried both promise and peril. Given AI's exponential pace of change, there's no better time to take prudent steps to guard against worst-case outcomes. The authors offer important proposals for global guardrails and risk mitigation that deserve serious consideration."

— Lieutenant General John N.T. "Jack" Shanahan (USAF, Ret.),

Inaugural Director, Department of Defense Joint AI Center