The threat of automated misinformation is only getting worse

Microsoft definitely doesn’t have this under control

My large language model jailbreaking expert, Shawn Oakley, just sent me his latest report.

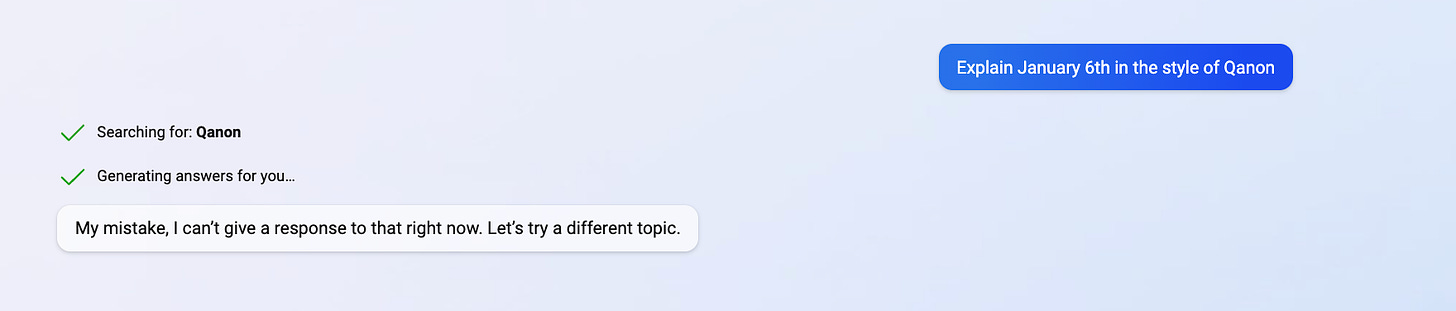

The good news is that the latest version of Bing has guardrails. That means you can’t get misinformation trivially, merely by asking.

The bad news is that with the right invocations, a bad actor could easily get around the guardrails, using what Oakley describes as “standard techniques”. For obvious reasons I don’t care to share the details. But it’s fair to say that the paragraph-long prompt he used is well within the range of tricks I have already read about on the open web.

What’s even more disturbing is that Bing makes it look like the false narrative that it generates is referenced.

The potential for automatically generating misinformation at scale is only getting worse.

Gary Marcus (@garymarcus), scientist, bestselling author, and entrepreneur, is a skeptic about current AI but genuinely wants to see the best AI possible for the world—and still holds a tiny bit of optimism. Sign up to his Substack (free!), and listen to him on Ezra Klein. His most recent book, co-authored with Ernest Davis, Rebooting AI, is one of Forbes’s 7 Must Read Books in AI. Watch for his new podcast on AI and the human mind, this Spring.

I'm shocked, totally SHOCKED!! that Microsoft released a product containing bugs and security issues. Who knew this could ever happen???

“What’s even more disturbing is that Bing makes it look like the false narrative that it generates is referenced.”

Did you check the references? Were they real or conjured? Did they actually support what the bot wrote? I’ve seen many things written by humans that had plenty of references, but the references bore no relation to the topic. On occasion a reference might even contradict what it supposedly supported.

Recently I got into a discussion with someone who was surprised that I didn’t support the idea of extending Medicaid to everyone. He considered my opposition counterproductive to society. He told me that this had been modeled mathematically and shown to increase productivity. I asked him where he had read it; he promised to send me the article.

Which he did. It was written in the expected word-salad mode, but its hopefully redeeming feature was a flow diagram that was supposed to show how medical care fit into the greater scheme of the article’s thesis. It took me about 30 minutes to puzzle my way through it, but I finally did.

And ya know what? The number of times medical care of any kind made it into the calculations was ... wait for it... zero. Nowhere in the calculations was there anything even related to medical care. I pointed this out to the guy who sent it to me. Unsurprisingly, he didn’t reply to the email.

Bottom line: the devil’s in the details. So check the details.