Three baffling claims about AI and machine learning in four days, statistical errors in top journals, and claims from Yann LeCun that you should not believe.

The trouble with too much benefit of the doubt

Something is in the air. It never surprises me when The New York Times says that a revolution is coming, and the promised revolution doesn’t materialize. That’s been happening for a long time. Decades, in fact.

Consider, for example, what John Markoff said in 2011 about IBM Watson.

“For I.B.M., the showdown was not merely a well-publicized stunt and a $1 million prize, but proof that the company has taken a big step toward a world in which intelligent machines will understand and respond to humans, and perhaps inevitably, replace some of them.”

That hasn’t come to pass. Eleven years later, comprehension is still lacking (see any of my recent essays here) and very few if any jobs have actually been replaced by AI. Every truck I know is still driven by a person (except in some limited-use test pilots), and no radiologists have yet been replaced. Watson itself was recently sold for parts.

Then again, the Times first said neural networks were on the verge of solving AI in 1958; forecasting AI just doesn’t seem to be the Times’ strong point. Fine.

But over the last few days I have seen a whole bunch of similarly overenthused claims from serious researchers who ought to know better.

Example one, the least objectionable of the three, but nonetheless a sign of the overgenerous times, came from Stanford economist Erik Brynjolfsson:

Brynjolfsson is totally correct that human intelligence is a very narrow slice of the space of possible intelligences (that’s a point Chomsky has been making about human language since before I was born). Undoubtedly, intelligences cleverer than ours are possible and may yet materialize.

But—hold up—what the heck is the hedging word probably doing here, even in parentheses?

Any normal 5-year-old can hold a conversation about just about anything in a native language that they acquired more or less from scratch just a couple of years earlier, climb an unfamiliar jungle gym, follow the plot of a new cartoon, or learn the rules of a new card game just by being told them, without tens of thousands of trials, and so on, pretty much endlessly. Human children are constantly learning new things, often from tiny amounts of data. There is literally nothing like that in the AI world.

Hedging with probably makes it sound like we think there is a viable competitor out there for human general intelligence in the AI world. There isn’t.¹ It would be like me saying Serena Williams could probably beat me in tennis.

§

Yann LeCun, meanwhile, has been issuing a series of puzzling tweets claiming that convnets, which he invented (“or whatever”), can solve pretty much everything, which isn’t true and ostensibly contradicts what he himself told ZDNet a couple of weeks ago. But wait, it gets worse. LeCun went on to write the following, which really left me scratching my head:

Well, no. Augmentation is way easier, because you don’t have to solve the whole job. A calculator augments an accountant; it doesn’t figure out what is deductible or where there might be a loophole in a tax code. We know how to build machines that do math (augmentation); we don’t know how to build machines that can read tax codes (replacement).

Or consider radiology:

The medical AI community weighed in, overwhelmingly on my side of the argument:

Just because AI can solve some aspects of radiology doesn’t mean, by any stretch of the imagination, that it can solve all of them; Jeopardy isn’t oncology, and scanning an image is not reading clinical notes. There is no evidence whatsoever that what has gotten us, sort of, into the game of reading scans is going to bring us all the way into the promised land of a radiologist in an app any time soon. As Matthew Fenech, co-founder and Chief Medical Officer of @una_health, put it this morning, “to argue for radiologist replacement in anything less than the medium term is to fundamentally misunderstand their role.”

§

But these are just off-the-cuff tweets. Perhaps we can forgive their hasty generosity. I was even more astonished by a massive statistical mistake in deep learning’s favor in an article in one of the Nature journals, on the neuroscience of language.

The article is by (inter alia) some MetaAI researchers:

Ostensibly the result is great news for deep learning fans, revealing correlations between deep learning and the human brain. The lead author claimed on Twitter in the same thread that there were “direct [emphasis added] links” between the “inner workings” of GPT2 and the human brain:

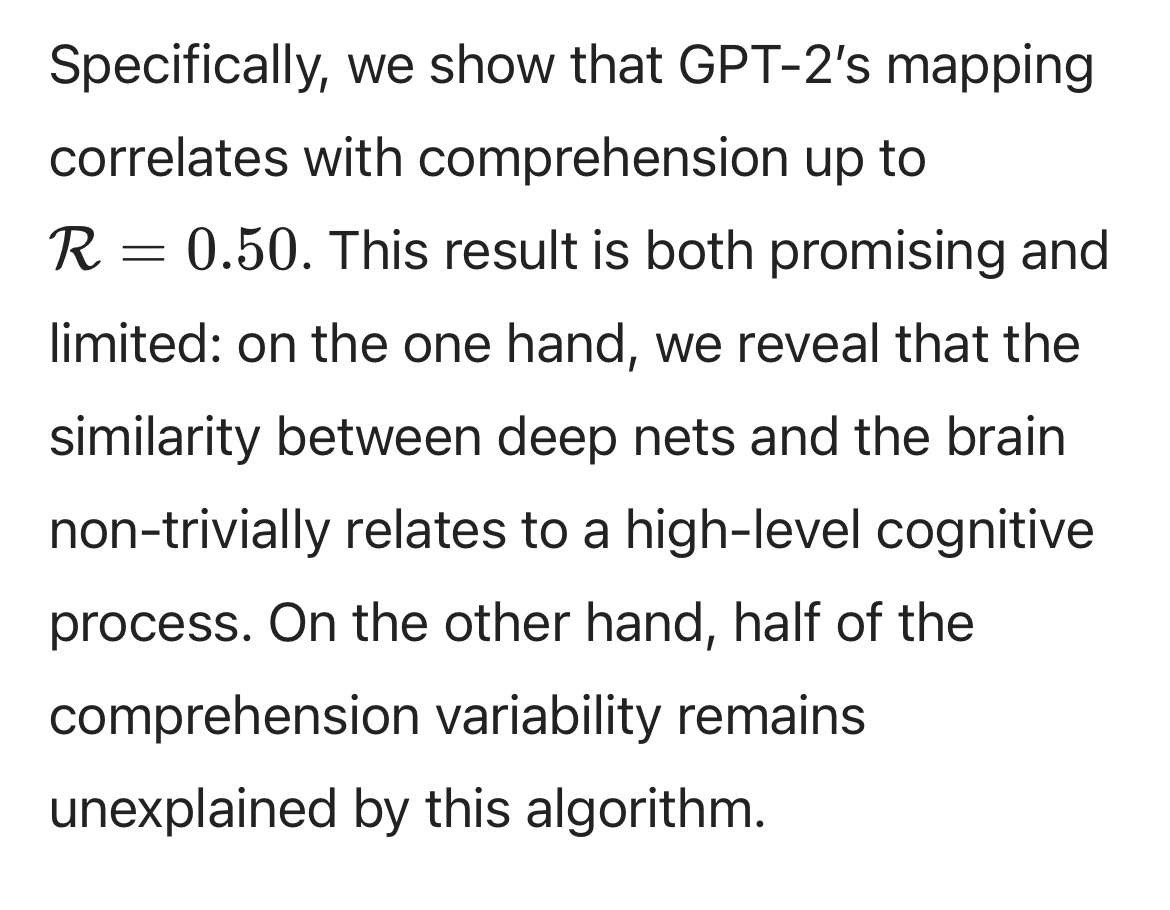

But the fine print matters; what we see is merely a correlation, and the correlation that is observed is decent but hardly decisive, R = 0.50.

That’s enough to get you published, but it also means there’s a lot that you don’t know. When two variables are correlated like that, it doesn’t mean A causes B (or vice versa); it doesn’t even mean they are in lockstep. It is akin to the magnitude of the correlation between height and weight: if I know your height and nothing else about you, I can make a slightly educated guess about your weight. I might be close, but I could also be off; there is certainly no guarantee.
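To put a rough number on that “slightly educated guess”: assuming, for illustration only, that the two variables are linearly related with roughly normal scatter (a textbook simplification, not something the paper claims), the typical error of the best straight-line guess of one variable y from the other x shrinks from the spread of y alone, σ_y, to the residual spread σ_{y|x}:

```latex
\sigma_{y \mid x} = \sigma_y \sqrt{1 - R^2},
\qquad
R = 0.5 \;\Rightarrow\; \sigma_{y \mid x} = \sigma_y \sqrt{0.75} \approx 0.87\,\sigma_y
```

In other words, with R = 0.5, knowing someone’s height trims the typical error of a weight guess by only about 13%.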

The paper itself addresses this, but when it does, it makes a gaping mistake, erring, again, on the side of attributing too much to deep learning. Here is what they say (people who know their stats well might spot the error right away):

Uh oh. As Stats 101 teaches us, the amount of variability explained is not R but rather R squared. So if you have a correlation of R = .5, you actually “explain” (really, just “predict”) only 25% of the variance, which means fully three-quarters (not half) of the variability remains unexplained. That’s a huge difference. (In a DM, I pointed out the error to the senior author, King, and he concurred, promising he would contact the journal to make a correction.)

Predicting a mere 25% of the variance gives license to speculate, but it certainly doesn’t mean you have nailed the answer. In the end, all we really have is evidence that something that matters to GPT also matters to the brain (for example, frequency and complexity), but we are a very long way from saying that whatever is weakly correlated is actually functioning in the same way in both. It’s far too much charity to deep learning to claim that there is any kind of direct link.

Now here’s the thing. Stuff happens. Scientists are fallible, and good on the senior author for writing to the journal for a correction. But the fact that this slipped through peer review at a Nature journal astounds me. What it says to me is that people liked the story and didn’t read very carefully. (And, hello, reading carefully is the number one job of a peer reviewer. #youhadonejob)

When that happens, when reviewers like the story but don’t read critically, it says that they are voting with their hearts, and not their brains.

¹ As I asked on Twitter, “If Optimus could solve all the motor control problems Tesla aims to solve, and we had to now install a ‘general intelligence’ in it, in order to make it a safe and useful domestic humanoid robot, what would we install?”

The answer is that at present we have no viable option; AlphaStar and LLMs would surely be unsafe and inadequate; and we don’t yet have anything markedly better. We can’t really build humanoid domestic robots without general intelligence, and we don’t yet have any serious candidates. No probably about it.

It's fascinating that the authors conclude that GPT-2 "may be our best model of language representations in the brain" when really what they have is a correlation between one layer of GPT-2 and one aspect of the data that accounts for only about 25% of the variance. If they mean "our best (simulated) model," then I guess they might have a point, although it's hard to know what being the best AI model of any cognitive process is worth at this point. If they mean "best model (period)," that's quite the claim.

Another good post pointing out problems with the AI reporting out there.

You make it your business to point out errors (especially unsupported claims). But such 'facts' do not convince people. As psychological research has shown, it works the other way around: convictions influence what we accept (or even notice) as 'facts' much more than facts influence convictions. AI hype, like many other human convictions (especially extreme ones), is rather resistant to fact and logic.

What AI-hype is thus illustrating is — ironically enough — not so much the power of digital AI, but the weakness of humans.

Our convictions stem from reinforcement, indeed a bit like ML. For us it is about what we hear/experience often or hear from a close contact. That is not so different from the 'learning' of ML (unsupervised/supervised). That analogy leads ML/AI-believers to assume that it must be possible to get something that has the same 'power' that we do. Symbolic AI's hype was likewise built on an assumption/conviction, namely that intelligence was deep down based on logic and facts (a conviction that resulted from "2500 years of footnotes to Plato"). At some point, the lack of progress will break that assumption. You're just recognising it earlier than most and that is not a nice situation to be in. Ignorance is ...